Voice Cloning: Practical Steps to Capture Benefits Without the Risk

30/1/2026

Problem: Voice cloning can make services faster, more personal, and more accessible—but it also creates real risks: convincing deepfakes, fraudulent impersonation, and privacy violations that erode trust.

Agitate: Without clear safeguards, those risks become costly. Customers can be scammed, protected speech can be misused, and organizations face legal exposure and reputational damage. Small pilots can spiral into large-scale abuse if consent, detectability, and controls are missing.

Solution: Design voice projects around tight scope, clear consent, and measurable safety so you capture benefits without accepting undue risk. Practical steps include:

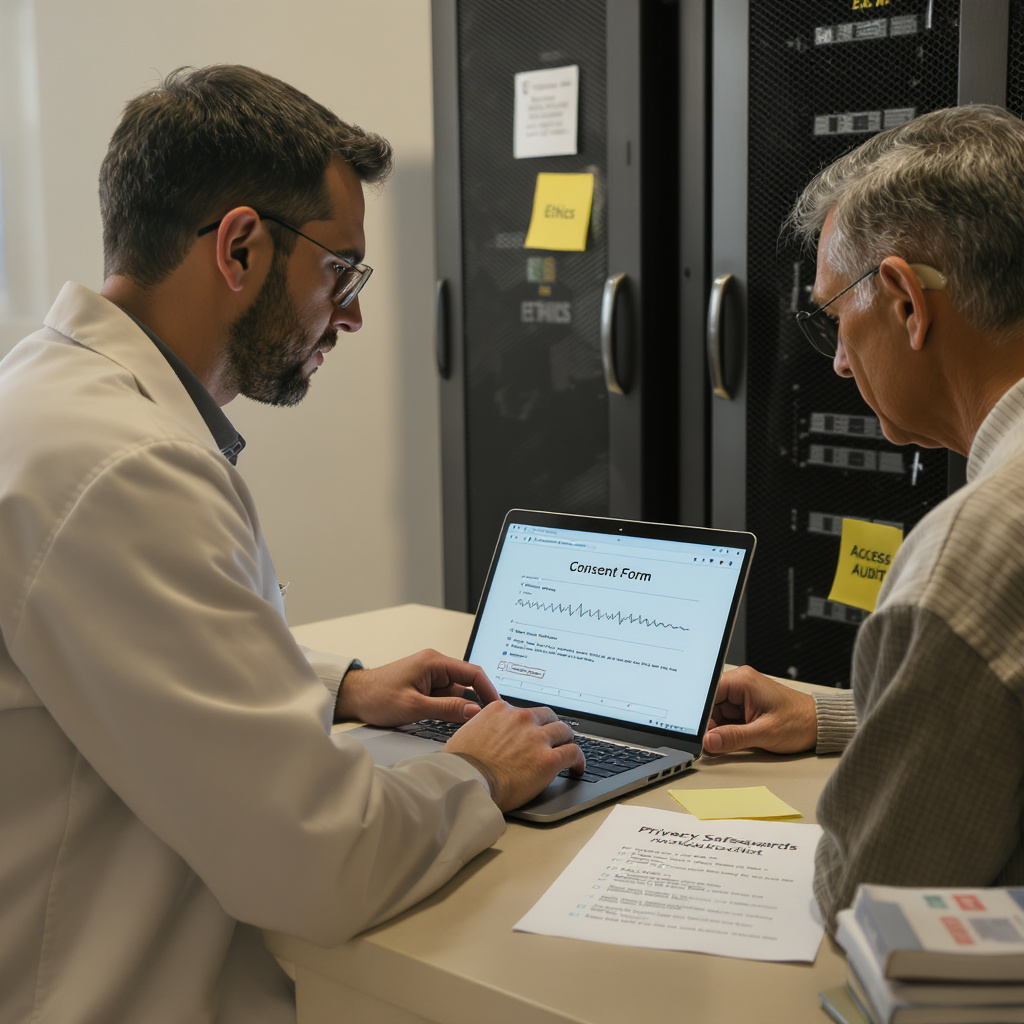

- Consent protocols: Record who agreed, when, and for which uses; allow revocation and keep auditable logs.

- Detectability: Embed watermarks and provenance metadata to flag synthetic audio for platforms and detectors.

- Authentication: Use signed assets, role-based API controls, and optional speaker verification so only authorized systems synthesize a voice.

- Controlled pilots: Time-box experiments (4–8 weeks), limit voices and channels, include human review, and define stop/gate criteria.

- Measure everything: Track mean opinion score (MOS), word error rate (WER), customer satisfaction (CSAT), false-acceptance rates, and incident counts to evaluate both benefit and safety.
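
To make the stop/gate idea concrete, here is a minimal Python sketch that computes word error rate from a reference and a recognized transcript, then checks a pilot's metrics against gate thresholds. The `PilotMetrics` class, the `failed_gates` function, and every threshold value are hypothetical placeholders for illustration, not recommended targets; each pilot would need to set its own.

```python
from __future__ import annotations

from dataclasses import dataclass


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of words in the reference."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] is the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


@dataclass
class PilotMetrics:
    mos: float                    # mean opinion score on a 1-5 scale
    wer: float                    # word error rate, 0.0 means no errors
    csat: float                   # satisfied share of respondents, 0-1
    false_acceptance_rate: float  # assumed: verification wrongly accepts
    incidents: int                # confirmed misuse incidents in the pilot


def failed_gates(m: PilotMetrics) -> list[str]:
    """Return the names of gates the pilot fails (empty list = continue)."""
    # All thresholds below are hypothetical examples.
    checks = {
        "mos": m.mos >= 3.8,
        "wer": m.wer <= 0.10,
        "csat": m.csat >= 0.80,
        "false_acceptance_rate": m.false_acceptance_rate <= 0.01,
        "incidents": m.incidents == 0,
    }
    return [name for name, passed in checks.items() if not passed]


if __name__ == "__main__":
    wer = word_error_rate("play my saved greeting", "play my shaved greeting")
    metrics = PilotMetrics(mos=4.1, wer=wer, csat=0.86,
                           false_acceptance_rate=0.004, incidents=0)
    print(failed_gates(metrics))  # one substitution in four words fails "wer"
```

Returning the list of failed gates, rather than a single pass/fail flag, gives the human reviewers in the pilot a specific reason to pause or stop.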

Practical governance: Combine legal review, security practices (key management, provenance), cross-functional checkpoints, and an ethics board for sensitive cases. Publish anonymized findings and use independent audits where appropriate.
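
As a rough illustration of how auditable consent and signed provenance could fit together, the Python sketch below keeps a consent ledger with revocation and an append-only audit log, and signs a small provenance manifest for each synthetic clip. The names `ConsentLedger`, `sign_manifest`, and `verify_manifest`, the manifest fields, and the use of a shared-secret HMAC are all assumptions made for this example; a production system would more likely use asymmetric signatures with keys held in a managed key store.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    speaker_id: str
    granted_at: datetime
    allowed_uses: frozenset
    revoked_at: Optional[datetime] = None


@dataclass
class ConsentLedger:
    """Hypothetical in-memory ledger; real storage would be durable."""
    records: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)  # append-only by convention

    def _log(self, event: str, speaker_id: str, detail: str = "") -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "event": event, "speaker_id": speaker_id, "detail": detail,
        })

    def grant(self, speaker_id: str, uses: set) -> None:
        self.records[speaker_id] = ConsentRecord(
            speaker_id, datetime.now(timezone.utc), frozenset(uses))
        self._log("grant", speaker_id, ",".join(sorted(uses)))

    def revoke(self, speaker_id: str) -> None:
        # Raises KeyError if the speaker never granted consent.
        self.records[speaker_id].revoked_at = datetime.now(timezone.utc)
        self._log("revoke", speaker_id)

    def is_permitted(self, speaker_id: str, use: str) -> bool:
        rec = self.records.get(speaker_id)
        ok = rec is not None and rec.revoked_at is None and use in rec.allowed_uses
        self._log("check", speaker_id, f"{use}:{'allow' if ok else 'deny'}")
        return ok


def _payload(manifest: dict) -> bytes:
    # Sorted keys give a stable byte encoding to sign and verify.
    return json.dumps(manifest, sort_keys=True).encode("utf-8")


def sign_manifest(audio: bytes, speaker_id: str, use: str, key: bytes) -> dict:
    """Build a provenance manifest for a synthetic clip and sign it."""
    manifest = {
        "audio_sha256": hashlib.sha256(audio).hexdigest(),
        "speaker_id": speaker_id,
        "use": use,
        "synthetic": True,  # flags the clip as machine-generated
    }
    manifest["signature"] = hmac.new(key, _payload(manifest), hashlib.sha256).hexdigest()
    return manifest


def verify_manifest(audio: bytes, manifest: dict, key: bytes) -> bool:
    """Check both the signature and that the audio matches the manifest."""
    claimed = dict(manifest)
    signature = str(claimed.pop("signature", ""))
    expected = hmac.new(key, _payload(claimed), hashlib.sha256).hexdigest()
    audio_ok = claimed.get("audio_sha256") == hashlib.sha256(audio).hexdigest()
    return hmac.compare_digest(signature, expected) and audio_ok
```

A synthesis service built this way would call `is_permitted` before generating audio and attach the signed manifest to every clip, so an auditor can later trace each clip back to a consent decision in the log.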

Run small, measurable pilots that prioritize consent and detectability, so that voice AI improves accessibility and efficiency while keeping people and trust front and center.