TinyML & Edge AI: Practical Pillar Post with Cluster Topics

4/2/2026

Pillar post strategy

This pillar post gives a practical, measured guide to TinyML and Edge AI, paired with a set of shorter cluster posts that dive into the technical and product subtopics. The pillar summarizes core concepts, design tradeoffs, and deployment best practices; the cluster posts provide hands‑on how‑tos, benchmarks, and checklists you can link from product pages or tutorials to build topical authority and improve internal SEO linking.

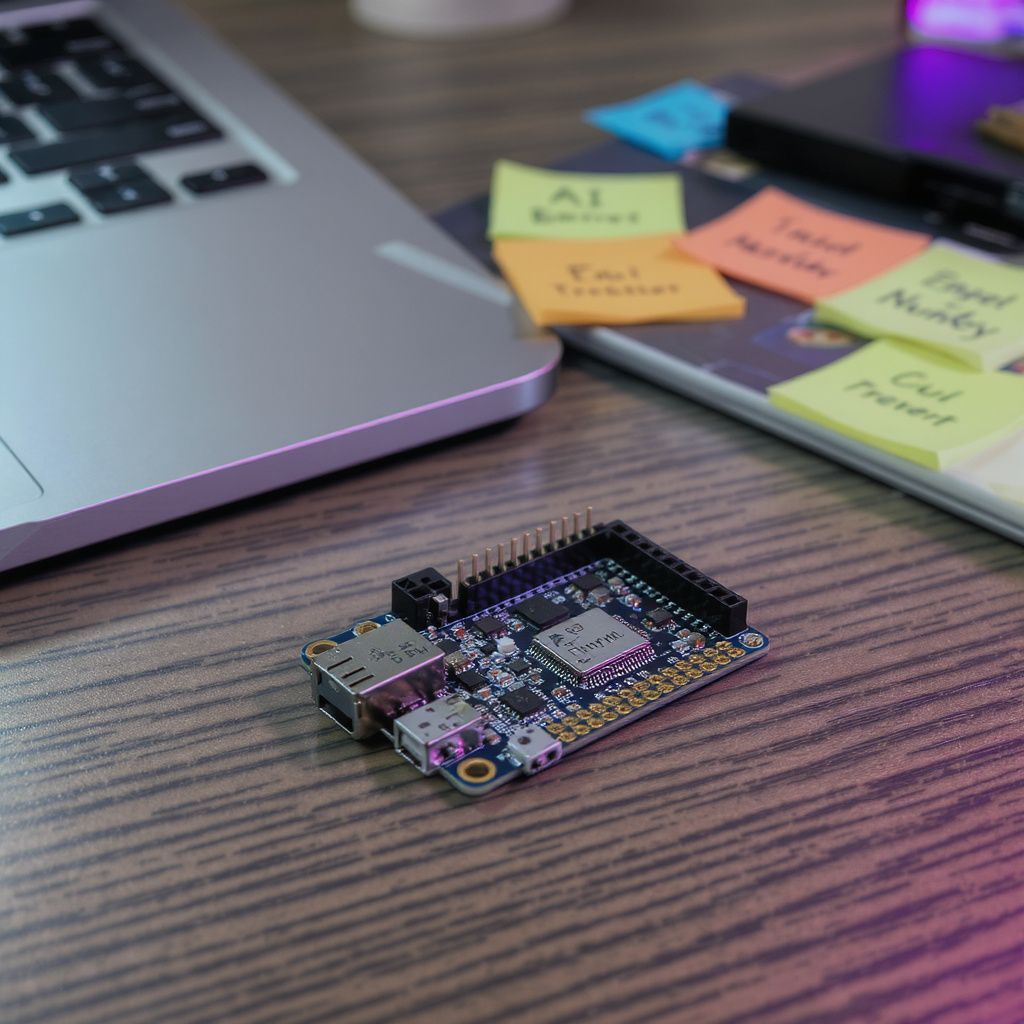

What TinyML and Edge AI mean in practice

TinyML refers to machine learning models small enough to run on microcontrollers. Edge AI means running inference on devices near the data source rather than relying on remote servers. Together they enable low‑latency, privacy‑preserving, and low‑bandwidth features for everyday products — from wake‑word detection on earbuds to vibration‑based pump monitoring in factories.

Core technical tactics

- Model compression: pruning, distillation, and compact architectures to reduce size while retaining behavior.

- Quantization: 8‑bit or mixed precision to cut memory use and speed up inference, balanced against the accuracy loss you measure on your own validation data.

- Efficient layers: depthwise separable convolutions, tiny recurrent blocks, and carefully chosen feature sets.
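To make the quantization tactic concrete, here is a minimal sketch of asymmetric (affine) 8‑bit quantization in plain Python. The function names and sample weights are illustrative assumptions, not taken from any particular toolchain; production frameworks typically add per‑channel scales and calibration data on top of this idea.

```python
def quant_params(values, num_bits=8):
    """Return (scale, zero_point, qmin, qmax) for asymmetric affine quantization."""
    qmin, qmax = -(2 ** (num_bits - 1)), 2 ** (num_bits - 1) - 1
    # Force the range to include 0.0 so that zero is represented exactly.
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # avoid a zero scale for all-zero input
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point, qmin, qmax


def quantize(values, scale, zero_point, qmin, qmax):
    """Map floats to integers, clamping to the representable range."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point)) for v in values]


def dequantize(quantized, scale, zero_point):
    """Map integers back to approximate float values."""
    return [(q - zero_point) * scale for q in quantized]


if __name__ == "__main__":
    weights = [-1.0, -0.5, 0.0, 0.25, 1.0]  # hypothetical layer weights
    scale, zp, qmin, qmax = quant_params(weights)
    q = quantize(weights, scale, zp, qmin, qmax)
    restored = dequantize(q, scale, zp)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.5f} zero_point={zp} worst_error={worst:.5f}")
```

The worst‑case reconstruction error is one way to sanity‑check a quantization range before measuring end‑to‑end accuracy on the target board.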

Hardware and runtime considerations

Microcontrollers impose tight flash/RAM and power budgets. Choose hardware by role (Cortex‑M for ultra‑low power, tiny NPUs/gateways for heavier inference) and benchmark on the target board. Prioritize measured power draw, actual memory usage including stack/heap, and required peripherals over theoretical MHz or GFLOPS.

Power, sensing, and duty cycling

Extend battery life by gating full inference behind cheap heuristics or low‑power sensors, batching inputs when possible, and using deep sleep states. Measure energy per inference and duty cycles with hardware‑in‑the‑loop tests rather than relying solely on simulations.
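The arithmetic behind duty cycling can be sketched as follows. All currents, timings, and the battery capacity below are made‑up placeholder values; substitute figures measured on your own board. The model also ignores battery self‑discharge and regulator losses.

```python
def average_current_ma(active_ma, sleep_ma, active_s, period_s):
    """Time-weighted average current for one wake/sleep cycle."""
    duty = active_s / period_s
    return active_ma * duty + sleep_ma * (1.0 - duty)


def battery_life_days(capacity_mah, avg_ma):
    """Idealized battery life: capacity divided by average draw."""
    return capacity_mah / avg_ma / 24.0


def energy_per_inference_mj(voltage_v, active_ma, inference_s):
    """Energy in millijoules: volts * milliamps * seconds."""
    return voltage_v * active_ma * inference_s


if __name__ == "__main__":
    # Hypothetical device: 10 mA while awake for 0.1 s every 10 s, 0.01 mA asleep.
    avg = average_current_ma(active_ma=10.0, sleep_ma=0.01, active_s=0.1, period_s=10.0)
    days = battery_life_days(capacity_mah=220.0, avg_ma=avg)
    print(f"average current: {avg:.4f} mA, estimated life: {days:.1f} days")
    print(f"energy per inference: {energy_per_inference_mj(3.0, 10.0, 0.05):.2f} mJ")
```

With these placeholder numbers the estimate comes out at about 83 days, which shows how strongly the sleep current and wake interval dominate the result.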

Privacy, data minimization, and operational design

Keep raw audio, images, and health signals local when possible. Transmit compact features or anonymized events only. Design intermittent cloud sync for updates and analytics, and implement secure OTA, atomic updates, staged rollouts, and rollback paths for safe model lifecycle management.
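One common way to implement a staged rollout is to hash each device ID into a stable bucket and enable the update for buckets below the current percentage. The sketch below assumes string device IDs and a per‑release salt; both names are hypothetical.

```python
import hashlib


def rollout_bucket(device_id: str, salt: str = "model-v2") -> int:
    """Map a device to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{salt}:{device_id}".encode()).digest()
    return int.from_bytes(digest[:4], "big") % 100


def in_rollout(device_id: str, percent: int, salt: str = "model-v2") -> bool:
    """True if this device should receive the update at the given stage."""
    return rollout_bucket(device_id, salt) < percent


if __name__ == "__main__":
    fleet = [f"dev-{i}" for i in range(1000)]  # hypothetical fleet
    for stage in (1, 10, 50, 100):
        count = sum(in_rollout(d, stage) for d in fleet)
        print(f"stage {stage:3d}%: {count} devices")
```

Because the bucket depends only on the ID and salt, a device that receives the update at 10% stays included at 50%, and changing the salt per release avoids always testing on the same devices.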

Data, robustness, and testing

Collect representative edge data across seasons, users, and device placements. Use targeted augmentation and active‑learning loops for labeling. Include environmental tags so you can slice performance by condition. Plan calibration routines, confidence checks, and safe fallback behaviors for low‑confidence cases.
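A confidence check with a safe fallback might look like the sketch below. The labels, the 0.8 threshold, and the "unknown" fallback are illustrative assumptions; on a microcontroller the same logic would usually be written in C against the model's output tensor.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_with_fallback(logits, labels, min_confidence=0.8, fallback="unknown"):
    """Return (label, confidence), or the fallback label when confidence is low."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < min_confidence:
        return fallback, probs[best]
    return labels[best], probs[best]


if __name__ == "__main__":
    labels = ["normal", "bearing_fault", "cavitation"]  # hypothetical classes
    print(classify_with_fallback([4.0, 0.0, 0.0], labels))  # clear winner
    print(classify_with_fallback([1.0, 0.9, 0.0], labels))  # ambiguous input
```

Softmax scores are often overconfident, so the threshold is best chosen from held‑out edge data rather than picked by intuition.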

Metrics and piloting

Start small with focused pilots and clear KPIs: latency (median and p95), accuracy with class‑level error breakdowns, energy per event, and memory margins. Use A/B tests or side‑by‑side device comparisons, and translate technical KPIs into business outcomes like reduced cloud costs, uptime improvements, or payback period.
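As a small illustration of the latency KPIs, the sketch below computes the median and a nearest‑rank p95 from a list of timings. The sample values are invented, and other percentile definitions (for example, interpolated ones) can give slightly different results.

```python
import math
import statistics


def percentile_nearest_rank(samples, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(samples_ms):
    """Summarize latency samples as median and p95, in milliseconds."""
    return {
        "median_ms": statistics.median(samples_ms),
        "p95_ms": percentile_nearest_rank(samples_ms, 95),
    }


if __name__ == "__main__":
    # Hypothetical per-inference timings from a dev kit, including one slow outlier.
    timings = [12.1, 11.8, 12.4, 12.0, 11.9, 12.2, 30.5, 12.3, 12.0, 11.7]
    print(latency_summary(timings))
```

Reporting p95 alongside the median exposes outliers, such as the slow sample above, that an average would hide.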

Security, compliance, and fairness

Implement secure boot, signed firmware, and encrypted data at rest and in transit. In regulated domains, involve legal and compliance early and document datasets and model versions. Test models across representative populations and device conditions to avoid biased behavior.
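The verify‑before‑install step for signed firmware or model images can be sketched as below. Note the substitution: real secure boot chains generally use asymmetric signatures so that devices hold only a public key, whereas this example uses a shared‑secret HMAC from the Python standard library purely to show the control flow. The key and image bytes are placeholders.

```python
import hashlib
import hmac


def sign_image(image: bytes, key: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the image (stand-in for a real signature)."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_image(image: bytes, tag: bytes, key: bytes) -> bool:
    """Constant-time comparison of the expected and received tags."""
    return hmac.compare_digest(sign_image(image, key), tag)


def install_if_valid(image: bytes, tag: bytes, key: bytes) -> str:
    """Refuse to install any image whose tag does not verify."""
    if not verify_image(image, tag, key):
        return "rejected"
    return "installed"


if __name__ == "__main__":
    key = b"demo-key-not-for-production"  # placeholder secret
    image = b"\x00model-bytes\x01"        # placeholder model image
    tag = sign_image(image, key)
    print(install_if_valid(image, tag, key))
    print(install_if_valid(image + b"tampered", tag, key))
```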

Prototype to scale workflow

- Collect representative sensor data and annotate edge cases.

- Train and compress models offline, then test quantized models on hardware.

- Benchmark latency, memory, and energy on dev kits; iterate before custom hardware.

- Deploy pilots with compact telemetry, staged OTA, and rollback safety nets.
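The benchmarking step in the workflow above can end in a simple pass/fail gate. The sketch below compares measured values with budget limits; the metric names and numbers are hypothetical, and every metric is expressed so that lower is better (for example, error rate instead of accuracy).

```python
def check_budgets(measured, limits):
    """Return a list of failure messages; an empty list means every budget is met."""
    failures = []
    for name, limit in limits.items():
        value = measured.get(name)
        if value is None:
            failures.append(f"{name}: not measured")
        elif value > limit:
            failures.append(f"{name}: {value} exceeds limit {limit}")
    return failures


if __name__ == "__main__":
    limits = {  # hypothetical budgets for a pilot
        "p95_latency_ms": 50,
        "ram_bytes": 192 * 1024,
        "flash_bytes": 768 * 1024,
        "energy_mj": 2.0,
        "error_rate": 0.05,
    }
    measured = {  # hypothetical dev-kit results
        "p95_latency_ms": 41,
        "ram_bytes": 201 * 1024,
        "flash_bytes": 512 * 1024,
        "energy_mj": 1.6,
    }
    for line in check_budgets(measured, limits) or ["all budgets met"]:
        print(line)
```

Treating an unmeasured metric as a failure keeps a pilot from passing simply because a benchmark was skipped.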

Cluster posts (shorter, linkable subtopics)

- Cluster: Model Compression & Quantization — Hands‑on guide to pruning, distillation, and 8‑bit/mixed precision quantization with code snippets and expected accuracy tradeoffs. KPI: model size, change in top‑1 accuracy and false‑positive rate, and inference time on Cortex‑M.

- Cluster: Low‑Power Sensing Patterns — Architectures for duty cycling, event triggers, and sensor fusion that minimize wakeups. KPI: energy per day and energy per inference under representative duty cycles.

- Cluster: On‑Device Privacy & Data Minimization — Patterns for feature extraction, local aggregation, and transparency controls. KPI: bytes sent per device per day and retention policy compliance.

- Cluster: Hardware Selection & Benchmarking — How to pick microcontrollers, tiny NPUs, and dev kits; measurement methodology for latency, memory, and power. KPI: median/p95 latency, RAM/flash headroom, and energy (joules) per inference.

- Cluster: Pilot Checklist & Acceptance Tests — A reproducible test harness, acceptance criteria, and sample telemetry schema for pilots. KPI: pass/fail against latency, accuracy, and battery impact thresholds.

- Cluster: Security, OTA, and Compliance — Practical secure‑boot, signed model updates, and staged rollouts with rollback. KPI: successful staged update rate and rollback time under failure.
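For the telemetry schema and bytes‑per‑day KPIs mentioned in the clusters above, a compact fixed‑size event record is one possible starting point. The field layout below is an assumption for illustration, not a standard format.

```python
import struct

# Little-endian, no padding: uint32 timestamp, uint8 event id,
# uint8 confidence (0..255), uint16 battery millivolts.
EVENT_FORMAT = "<IBBH"
EVENT_SIZE = struct.calcsize(EVENT_FORMAT)  # 8 bytes


def pack_event(timestamp_s, event_id, confidence, battery_mv):
    """Encode one event; confidence in [0, 1] is stored as a single byte."""
    conf_byte = max(0, min(255, round(confidence * 255)))
    return struct.pack(EVENT_FORMAT, timestamp_s, event_id, conf_byte, battery_mv)


def unpack_event(payload):
    """Decode an event back into (timestamp_s, event_id, confidence, battery_mv)."""
    timestamp_s, event_id, conf_byte, battery_mv = struct.unpack(EVENT_FORMAT, payload)
    return timestamp_s, event_id, conf_byte / 255, battery_mv


def bytes_per_day(events_per_day):
    """Payload bytes sent per device per day, excluding transport overhead."""
    return events_per_day * EVENT_SIZE


if __name__ == "__main__":
    payload = pack_event(1_700_000_000, 3, 0.9, 3700)  # hypothetical event
    print(len(payload), unpack_event(payload))
    print(bytes_per_day(48), "payload bytes/day at one event every 30 minutes")
```

Sending events like this instead of raw sensor data keeps the bytes‑per‑day figure easy to predict and to audit.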

How to use this pillar and clusters

Use the pillar as the central reference and link each cluster post from product pages, tutorials, and documentation. Keep clusters short and actionable so they solve specific search intents, while the pillar covers strategy and cross‑cutting tradeoffs. Together they form a topic hub that helps readers find both high‑level guidance and hands‑on implementation details.

Next steps

Start with a single pilot: pick a focused capability, set KPIs, prototype on dev kits, measure on real hardware, and iterate. For hands‑on validation, consider vendor or community demo kits, and consult primary vendor docs and peer‑reviewed case studies when quoting performance numbers.