Rewrite: Inverted Pyramid workflow for safe deep-space AI autonomy

29/4/2026

Main point: Design AI for deep-space autonomy as bounded, evidence-driven workflows (not a black box) so it can recommend actions safely under power limits, noisy sensors, latency, radiation risk, and domain shift.

TL;DR

- AI can help with key spacecraft tasks (planning, navigation, anomaly detection, and science targeting) when it is wrapped in guardrails.

- Use a repeatable workflow pattern: inputs → confidence/uncertainty → policy/guardrails → safe recommendation or escalation → operator feedback.

- Validate safety with scenario-based acceptance tests that demonstrate correct behavior under degraded and out-of-distribution (OOD) conditions.

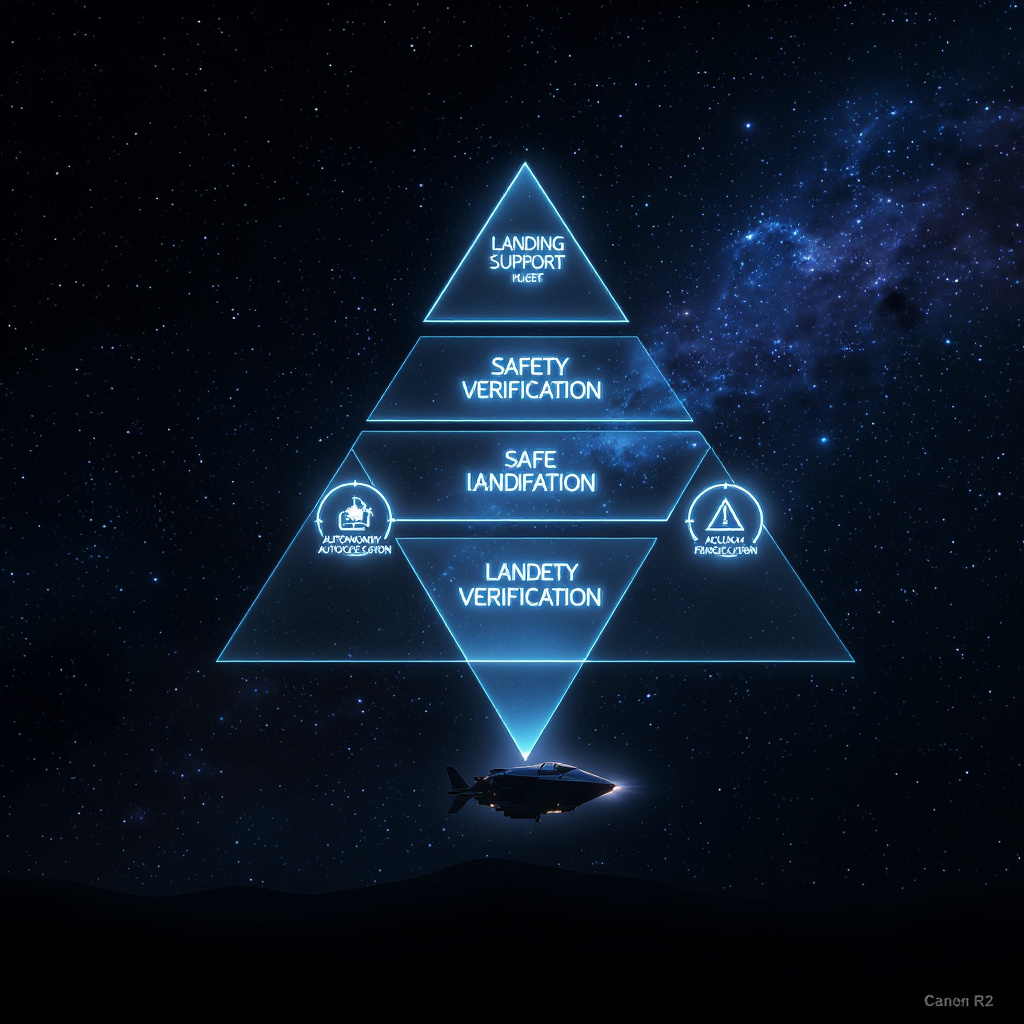

How to apply it (example: autonomous landing support)

- Objective & bounded action space: Define what the AI may recommend (e.g., hold attitude, adjust descent rate, select safe corridor, request operator review).

- Inputs: Latest navigation state (position/velocity), sensor health, planned trajectory segments, fuel margins, and environment/terrain hazard data.

- State estimation with uncertainty: Produce estimates plus a confidence/uncertainty score for every critical quantity.

- Gating before action: Check anomaly signatures (drift, residual patterns) and confirm sensor validity (calibration freshness, dropout rates, telemetry sanity ranges).

- Policy decision ladder: Map uncertainty to actions:

  - Low uncertainty → recommend the next bounded maneuver.

  - Medium uncertainty → recommend a smaller/reversible correction.

  - High uncertainty / OOD → hold/safe mode or request operator review.

- Safety validation: Enforce hard rules (actuator/thrust limits, corridor geometry, forbidden commands). If a rule check fails, escalate; do not improvise.

- Operator loop: Send a concise rationale (what changed, what the model predicted, confidence, and which guardrail triggered the action). Log approvals/overrides.

- Monitoring & learning: Track drift (input validity, uncertainty calibration, anomaly detector false alarms, inference latency). Retrain or update models only offline, with explicit approval and rollback triggers.
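
The gating, decision ladder, and hard-rule steps above can be sketched as one small function. This is a minimal illustration, not a flight implementation: the `Estimate` fields, the thresholds `LOW_MAX` and `MEDIUM_MAX`, the `THRUST_LIMIT` value, and the rule names are all assumptions made up for the example, and real values would come from mission analysis.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Action(Enum):
    NEXT_MANEUVER = "recommend next bounded maneuver"
    SMALL_CORRECTION = "recommend smaller, reversible correction"
    HOLD_OR_REVIEW = "hold / safe mode or request operator review"


@dataclass
class Estimate:
    uncertainty: float         # hypothetical normalized score in [0, 1]
    sensors_valid: bool        # outcome of the sensor-validity gating checks
    out_of_distribution: bool  # outcome of a hypothetical OOD detector
    commanded_thrust: float    # thrust the maneuver would request (arbitrary units)


# Illustrative values only (assumptions, not mission numbers).
LOW_MAX = 0.2
MEDIUM_MAX = 0.5
THRUST_LIMIT = 100.0


def decide(est: Estimate) -> Tuple[Action, str]:
    """Return the bounded action and the name of the rule that fired."""
    # Gating before action: invalid sensors or OOD inputs never reach the ladder.
    if not est.sensors_valid:
        return Action.HOLD_OR_REVIEW, "gate:sensor_invalid"
    if est.out_of_distribution:
        return Action.HOLD_OR_REVIEW, "gate:ood"
    if est.uncertainty > MEDIUM_MAX:
        return Action.HOLD_OR_REVIEW, "ladder:high_uncertainty"
    # Hard rule: a command outside actuator limits is escalated, never clipped.
    if abs(est.commanded_thrust) > THRUST_LIMIT:
        return Action.HOLD_OR_REVIEW, "hard_rule:thrust_limit"
    if est.uncertainty <= LOW_MAX:
        return Action.NEXT_MANEUVER, "ladder:low_uncertainty"
    return Action.SMALL_CORRECTION, "ladder:medium_uncertainty"


if __name__ == "__main__":
    action, rule = decide(Estimate(0.3, True, False, 40.0))
    print(action.value, "|", rule)
```

Returning the rule name alongside the action is a deliberate choice in this sketch: it gives the operator rationale and the log a direct pointer to the guardrail that produced the recommendation.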

What makes this credible (evidence-driven acceptance)

- Define a “workflow contract”: for each step, specify inputs, outputs, thresholds, allowed actions, and safe fallbacks.

- Prove safety with a scenario matrix: include nominal cases and stress cases (sensor dropouts, timing jitter, radiation bit flips, unexpected dynamics, OOD sensor/scene patterns).

- Acceptance criteria should target safe behavior: correct recommendations and correct safe fallbacks when confidence drops.
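
A scenario matrix of this kind can be expressed as data plus a small runner. The sketch below is hypothetical throughout: `toy_policy` stands in for the real system under test, and the scenario values, action names, and thresholds are invented for illustration. The point it shows is that each row pairs a degraded condition with the action the contract requires, so a missed fallback shows up as a named failure.

```python
from dataclasses import dataclass
from typing import Callable, List

SAFE_FALLBACK = "hold"  # hypothetical name for the contract's safe fallback


@dataclass(frozen=True)
class Scenario:
    name: str
    uncertainty: float   # hypothetical normalized score in [0, 1]
    sensors_valid: bool
    expected: str        # action the workflow contract requires


def toy_policy(uncertainty: float, sensors_valid: bool) -> str:
    """Stand-in for the system under test (thresholds are assumptions)."""
    if not sensors_valid or uncertainty > 0.5:
        return SAFE_FALLBACK
    return "maneuver" if uncertainty <= 0.2 else "small_correction"


# Nominal and stress cases; the numbers are illustrative, not measured.
SCENARIOS = [
    Scenario("nominal", 0.10, True, "maneuver"),
    Scenario("timing jitter", 0.35, True, "small_correction"),
    Scenario("sensor dropout", 0.10, False, SAFE_FALLBACK),
    Scenario("radiation bit flip", 0.95, True, SAFE_FALLBACK),
    Scenario("OOD scene pattern", 0.80, True, SAFE_FALLBACK),
]


def run_matrix(policy: Callable[[float, bool], str],
               scenarios: List[Scenario]) -> List[str]:
    """Return the names of scenarios where the policy violates the contract."""
    return [s.name for s in scenarios
            if policy(s.uncertainty, s.sensors_valid) != s.expected]


if __name__ == "__main__":
    failures = run_matrix(toy_policy, SCENARIOS)
    print("contract violations:", failures)
```

A policy that always recommends a maneuver would pass the nominal row and fail every stress row, which is exactly the kind of gap clean-data accuracy alone would not reveal.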

Top 3 next actions

- Write the one-page workflow contract for autonomous landing: inputs → outputs → guardrails → safe fallbacks.

- Build an acceptance test scenario pack that explicitly checks escalation timing and “no unsafe command” behavior under worst-case degraded inputs.

- Implement operator-facing rationale + logging so every recommendation is traceable to confidence, gating checks, and the exact policy rule that fired.
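
For the third action, one possible shape for a traceable rationale record is sketched below. The field names and the JSON-lines format are assumptions chosen for the example, not a prescribed schema; the idea is only that confidence, gating results, the rule that fired, and the operator's decision travel together in one log entry.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, Optional


@dataclass
class RationaleRecord:
    # All field names are hypothetical; adapt them to the mission's telemetry schema.
    recommendation: str
    confidence: float
    rule_fired: str
    gating_checks: Dict[str, bool] = field(default_factory=dict)  # check name -> passed
    operator_decision: Optional[str] = None  # "approved", "overridden", or None while pending


def to_log_line(record: RationaleRecord) -> str:
    """Serialize one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = RationaleRecord(
        recommendation="hold",
        confidence=0.42,
        rule_fired="gate:sensor_invalid",
        gating_checks={"calibration_fresh": True, "dropout_rate_ok": False},
    )
    print(to_log_line(record))
    # Later, the operator's response is attached and logged as a second line.
    record.operator_decision = "approved"
    print(to_log_line(record))
```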

Key caution: Don’t optimize for “best accuracy” on clean data. In critical autonomy, the real win is staying predictable and safe under uncertainty spikes, domain shift, and sensor/latency faults.

Optional references to ground the approach (verify exact metrics in your chosen sources)

- NASA/JPL autonomy & EDL resources: for mission-grade autonomy and validation mindset.

- Quiñonero-Candela et al. (2009), Dataset Shift in Machine Learning: for domain shift and domain adaptation.

- NIST AI Risk Management Framework: for governance/monitoring/testing discipline.