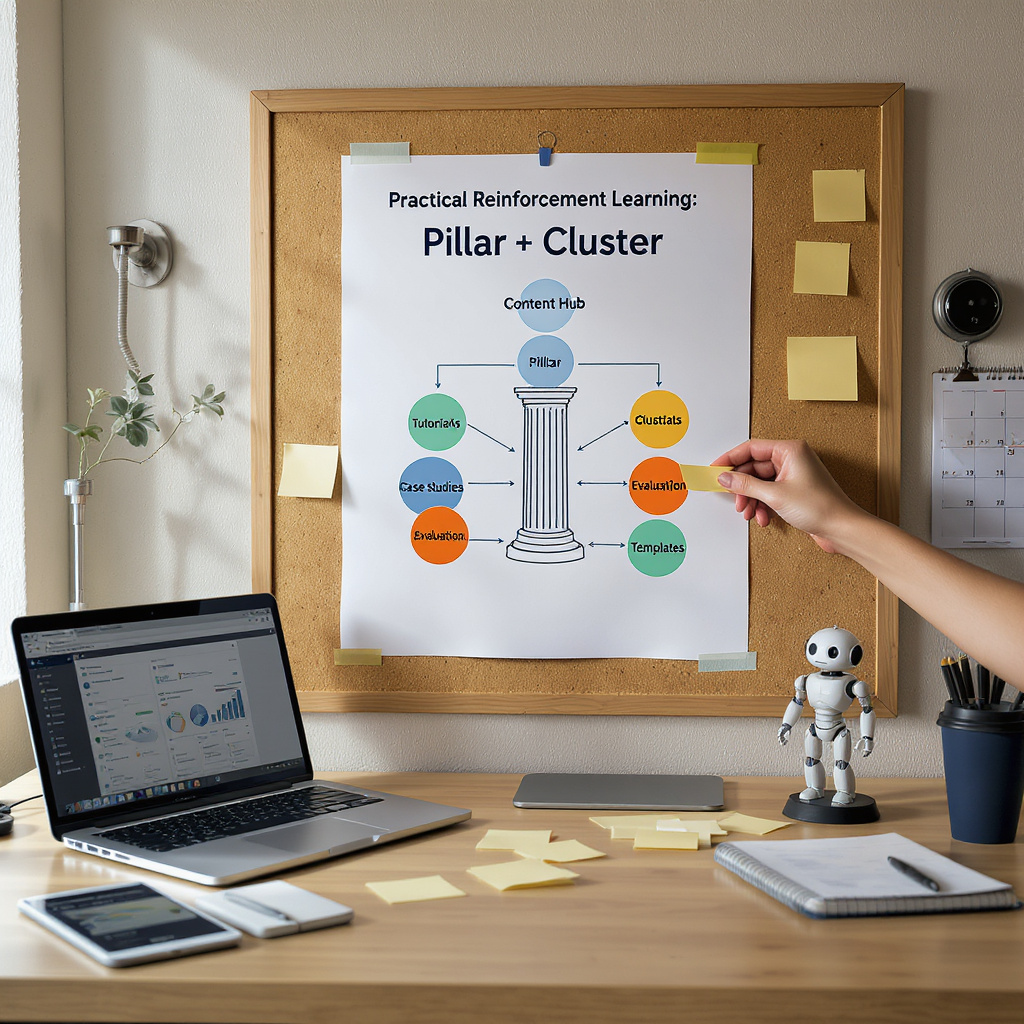

Practical Reinforcement Learning: Pillar + Cluster Content Hub

14/3/2026

Pillar post overview — Purpose and approach

This pillar post explains practical reinforcement learning (RL) for operations and outlines a Pillar + Cluster (Topic Hub) content strategy. The pillar gives a thorough, actionable overview of RL use in production while the cluster posts link to focused guides on specific subtopics. This structure builds authority, improves internal linking for SEO, and helps readers find both broad guidance and deep, tactical content.

Core concepts made practical

Agent & environment: The agent makes decisions and the environment is what it acts on (e.g., a warehouse robot on a fulfillment floor, or a recommendation engine serving website users). Keep examples concrete so non‑technical stakeholders can connect the concepts to operations.

State, action, reward: State = what the agent observes; action = its choices; reward = the objective signal. Design rewards to map directly to business KPIs, include safety/cost penalties, and consult domain experts to avoid reward gaming.
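As an illustration of tying a reward to a business KPI with explicit cost and safety penalties, here is a minimal sketch. The `StepOutcome` fields, the warehouse setting, and all weights are hypothetical placeholders, not recommended values; domain experts would set them in a real project.

```python
from dataclasses import dataclass


@dataclass
class StepOutcome:
    """Hypothetical per-step measurements from a warehouse picking task."""
    orders_fulfilled: int    # the KPI the business cares about
    energy_cost: float       # operating cost in currency units
    safety_violations: int   # e.g. entering a restricted zone


def reward(outcome: StepOutcome,
           kpi_weight: float = 1.0,
           cost_weight: float = 0.1,
           safety_penalty: float = 10.0) -> float:
    """Weighted KPI term minus cost and safety penalties (illustrative weights)."""
    return (kpi_weight * outcome.orders_fulfilled
            - cost_weight * outcome.energy_cost
            - safety_penalty * outcome.safety_violations)
```

Keeping the penalty terms separate makes it easier to review with stakeholders which behaviors the agent is discouraged from and by how much.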

Policy & learning: A policy maps observations to actions. Algorithms fall into families (value‑based, policy‑gradient, actor‑critic) and into learning styles (model‑free vs model‑based, on‑policy vs off‑policy). Choose methods that match your action space, sample budget and safety tolerance.
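To make "a policy maps observations to actions" concrete, the sketch below shows one simple value‑based policy: epsilon‑greedy selection over a table of estimated action values. The table layout, the state name in the usage note, and the default `epsilon` are assumptions for illustration only; production systems typically use function approximators rather than tables.

```python
import random
from typing import Dict, Hashable, List, Optional

# Hypothetical layout: state -> list of estimated values, one per action.
QTable = Dict[Hashable, List[float]]


def epsilon_greedy(q_table: QTable,
                   state: Hashable,
                   n_actions: int,
                   epsilon: float = 0.1,
                   rng: Optional[random.Random] = None) -> int:
    """Pick a random action with probability epsilon, else the best-valued one."""
    rng = rng or random.Random()
    if rng.random() < epsilon:
        return rng.randrange(n_actions)          # explore
    # Unseen states default to all-zero values, so action 0 is chosen.
    values = q_table.get(state, [0.0] * n_actions)
    return max(range(n_actions), key=lambda a: values[a])  # exploit
```

For example, with `epsilon=0.0` and a table entry `{"low_stock": [0.2, 0.9, 0.1]}`, the policy selects action index 1 for the state `"low_stock"`.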

Deployment & risk control

Favor staged rollouts: simulators and offline RL for safe iteration, shadow mode (the policy's decisions are logged but not executed) for direct comparison with the current system, then canary releases and conservative safety envelopes for live deployments. Monitor distribution shift, keep fallback policies, and require human override and automated rollback triggers.
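A safety envelope with a fallback policy and an automated rollback trigger could be sketched as follows. The function names, the scalar action, and the `max_drop` threshold are hypothetical; real envelopes are usually defined per action dimension with domain experts.

```python
from typing import Sequence


def guarded_action(proposed: float, fallback: float,
                   low: float, high: float) -> float:
    """Use the learned policy's action only if it lies inside the envelope."""
    return proposed if low <= proposed <= high else fallback


def should_roll_back(recent_rewards: Sequence[float],
                     baseline_mean: float,
                     max_drop: float) -> bool:
    """Signal rollback when the recent mean reward falls more than
    max_drop below the baseline mean (absolute units, assumed threshold)."""
    if not recent_rewards:
        return False  # no evidence yet, keep the current policy
    recent_mean = sum(recent_rewards) / len(recent_rewards)
    return recent_mean < baseline_mean - max_drop
```

In this sketch the fallback would typically be the existing rule‑based or human‑approved action, so that an out‑of‑envelope proposal never reaches the live system.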

Measurement and validation

Use clear KPIs and rigorous evaluation: A/B tests, holdout baselines, logged trajectories, confidence intervals, and operational metrics (latency, cost, anomaly rates). Track sample efficiency and monitor for unintended behavior with counterfactual evaluation and adversarial probes.
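One way to attach a confidence interval to an A/B comparison is a percentile bootstrap on the difference in mean KPI between the RL arm and the baseline arm. The sketch below uses only the Python standard library; the function name, the resample count, and the seed are assumptions, and it treats observations as independent, which logged trajectories often are not.

```python
import random
from statistics import mean
from typing import Sequence, Tuple


def bootstrap_diff_ci(control: Sequence[float],
                      treatment: Sequence[float],
                      n_resamples: int = 2000,
                      alpha: float = 0.05,
                      seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap interval for mean(treatment) - mean(control)."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_resamples):
        # Resample each arm with replacement at its original size.
        c = rng.choices(control, k=len(control))
        t = rng.choices(treatment, k=len(treatment))
        diffs.append(mean(t) - mean(c))
    diffs.sort()
    lo_idx = int((alpha / 2) * n_resamples)
    hi_idx = int((1 - alpha / 2) * n_resamples) - 1
    return diffs[lo_idx], diffs[hi_idx]
```

If the resulting interval excludes zero, that is evidence the arms differ on this KPI, though it says nothing about whether the difference persists under distribution shift.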

Ethics, compliance and governance

Embed fairness checks, data minimization, and documented testing. Consult industry regulations early for sectors like healthcare, finance, and transport, and obtain independent audits where required.

From idea to pilot

Start with a focused discovery workshop to map decision flows and KPIs. Then build a simulator or curate offline logs, run the policy in shadow mode, and expand in stages against pre-defined stop/go criteria. Assemble a compact cross‑functional team: data engineers, ML engineers, domain experts and a product lead.

Cluster posts — focused, linkable assets

- Reward Design: Practical Patterns — Concrete examples of reward functions, anti‑gaming guardrails, and checklists for aligning rewards to KPIs.

- Sim‑to‑Real Transfer Techniques — Domain randomization, system identification, transfer tests, and a checklist for validation before live rollout.

- Pilot Playbook — Step‑by‑step guide: discovery, simulator design, shadow mode, A/B testing, rollout gating and stop criteria.

- Monitoring & Ops for RL — Metrics, dashboards, drift detection, retraining triggers and fallback policy management.

- Algorithm Selection Guide — How to pick model‑free vs model‑based, on‑policy vs off‑policy, and sample‑efficient choices for discrete vs continuous actions.

- Case Studies & Evidence — Summaries and links to vetted deployments (datacenter cooling, robotics, personalization) with notes on what was production vs simulation.

- Safety, Ethics & Compliance Checklist — Sector‑specific controls, privacy suggestions and audit evidence templates.

- Tooling, Infrastructure & Costing — Libraries, simulation platforms, compute/storage estimates and reproducible pipeline patterns.

SEO and internal linking guidance

Make the pillar the canonical overview and link each cluster post back to relevant pillar sections; from the pillar, link forward to each cluster. Use clear, keyword‑rich anchor text in the cluster titles and keep cluster posts short, focused and optimized for long‑tail queries that support the pillar’s authority.

Next step offer

Run a one‑day discovery workshop to map decision flows and KPIs, followed by a 4–8 week pilot producing a reproducible prototype, evaluation plan and stop/go criteria. These deliverables create defensible evidence you can use to decide whether to scale RL in production.

Quick tip: When citing numeric improvements, always preserve the original context: production vs pilot vs simulation, sample size, baseline and evaluation period. Transparency builds credibility and supports adoption decisions.